Crawlability issues can stop search engines from finding, accessing, and indexing your webpages, which directly affects your rankings. No matter how good your content is, it cannot perform well unless it is crawled properly. This guide explains what crawlability is, which problems can occur, and which fixes improve crawl efficiency, visibility, and long-term SEO growth.

What Are Crawlability Problems?

Crawlability problems are technical or structural issues that prevent search engine bots from visiting and navigating your site efficiently.

Definition of crawlability

Crawlability is the ease with which search engines such as Google can access and scan the pages of your site. When bots cannot access a page, that page will not appear in search results.

Blocked or restricted access

Websites sometimes block bots accidentally through robots.txt rules, meta tags, or security measures. This leaves crucial pages invisible to search engines.

Problems with navigation and accessibility

Bots may be unable to reach every page because of poor site structure, missing links, or complicated navigation.

Technical SEO barriers

Errors such as dead links, redirect loops, and server failures are major crawlability issues that stop bots in their tracks.

What makes a website crawlable

A crawlable website has a simple structure, fast loading times, clear internal links, correct indexing signals, and no unintended restrictions on search engine bots.

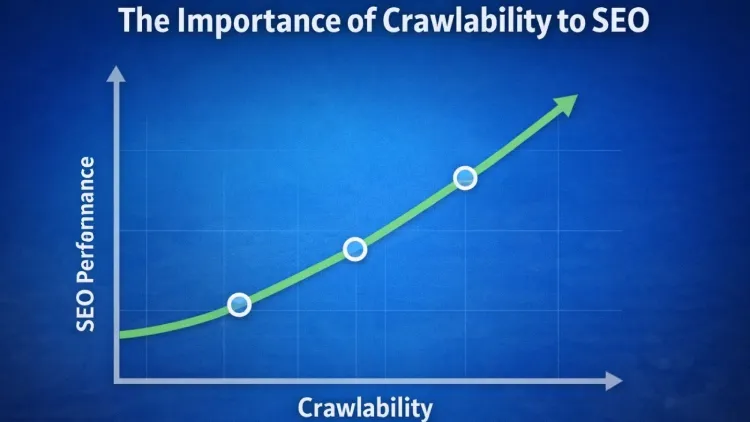

Why Crawlability Matters for SEO

Crawlability is the foundation of search engine optimization. No other SEO work pays off if bots cannot reach your pages.

Essential for indexing

Pages must be crawled before they can be indexed. If crawlability issues get in the way, your content never enters the search index at all.

Direct influence on rankings

Proper crawling ensures that all of your important pages are found, evaluated, and ranked.

Improves crawl budget efficiency

Search engines devote limited resources to crawling each site. Resolving crawlability issues helps bots spend that time on useful pages.

Supports the technical pillar of SEO

Technical SEO is one of the pillars of SEO, and crawlability is a central part of it.

Helps search engines understand your site

A well-structured, search engine-friendly site helps bots make sense of your content and rank it for relevant queries.


Common Crawlability Problems

1. Robots.txt Blocking Important Pages

- A poorly configured robots.txt file can block critical pages (such as product pages or blog posts).

- This is among the most common crawlability issues, and it can keep pages out of search results.

2. Broken Links (404 Errors)

- Broken links send bots to dead ends, which wastes crawl budget and lowers crawl efficiency.

- These errors also hurt user experience and trust.

3. Slow Page Speed

- Slow websites limit how many pages bots crawl in a visit.

- This matters even more because page speed is also a ranking factor.

4. Redirect Chains and Loops

- Long chains of redirects slow crawlers down and lower crawl efficiency.

- An infinite redirect loop can block access to a page entirely.

5. Poor Internal Linking

- Bots cannot discover more pages without suitable internal links.

- Search engines tend to miss orphan pages (pages with no internal links pointing to them).

6. Duplicate Content

- Duplicates create confusion about which version to crawl and rank.

- This lowers overall crawl efficiency and dilutes ranking signals.

7. No XML Sitemap

- Without a sitemap, search engines can miss important pages.

- A sitemap serves as a map for crawlers.

8. Server Errors (5xx)

- Server errors or downtime mean bots cannot access your site.

- Intermittent errors may reduce crawl frequency over time.

9. Crawl Traps

- Bots can get stuck in endless URL variations generated by filters, session IDs, or similar mechanisms.

- This wastes crawl budget and causes severe crawlability issues.

Identifying Crawlability Problems

- Google Search Console analysis: Use the Page indexing (formerly Coverage) and Crawl Stats reports to identify crawl errors and indexing problems.

- SEO crawling tools: SEMrush, Screaming Frog, and Ahrefs mimic the behavior of search engine bots and expose crawlability issues.

- Manual crawling: Crawl your site yourself with a desktop tool or browser extension to see how bots view it.

- Server log analysis: Server logs record how bots interact with your site, which helps you identify crawl frequency and errors.

- Types of crawl errors: The most common are 404 (Not Found), 500 (Server Error), soft 404, and redirect errors.
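As a rough illustration of server log analysis, the sketch below counts how often a given bot hit each kind of response. It assumes access logs in the common "combined" format; the sample lines, the `classify_status` buckets, and the `summarize_bot_hits` helper are hypothetical names made up for this example. Soft 404s return a 200 status, so they cannot be detected from status codes alone.

```python
import re
from collections import Counter

# Pulls the request, status code, and user agent out of a combined-format log line.
LOG_PATTERN = re.compile(
    r'"(?P<method>[A-Z]+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def classify_status(status: int) -> str:
    """Map an HTTP status code to a coarse crawl-error bucket."""
    if status == 404:
        return "not_found"
    if 300 <= status < 400:
        return "redirect"
    if 500 <= status < 600:
        return "server_error"
    if 200 <= status < 300:
        return "ok"
    return "other"


def summarize_bot_hits(lines, bot_token="Googlebot"):
    """Count responses served to user agents containing bot_token."""
    counts = Counter()
    for line in lines:
        match = LOG_PATTERN.search(line)
        if match and bot_token in match.group("agent"):
            counts[classify_status(int(match.group("status")))] += 1
    return counts


# Invented sample lines, for illustration only.
SAMPLE_LOG = [
    '66.249.66.1 - - [10/Jan/2026:10:00:00 +0000] "GET /blog/ HTTP/1.1" 200 5120 "-" '
    '"Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '66.249.66.1 - - [10/Jan/2026:10:00:05 +0000] "GET /old-page HTTP/1.1" 404 312 "-" '
    '"Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '203.0.113.7 - - [10/Jan/2026:10:00:09 +0000] "GET /blog/ HTTP/1.1" 200 5120 "-" '
    '"Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
]

print(summarize_bot_hits(SAMPLE_LOG))
```

A user-agent string can be spoofed, so a production check would also verify that the requests really come from the search engine.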

How to Fix Crawlability Problems

Optimize robots.txt

- Make sure that important pages are not blocked.

- Allow search engines to access valuable content, and block only unwanted or irrelevant pages.
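One way to check that important pages are not blocked is to test them against your robots.txt rules with Python's standard `urllib.robotparser` module. The sketch below is a minimal example; the rules, the URLs, and the `blocked_urls` helper are assumptions for illustration. Python's parser does simple prefix matching and may not mirror every detail of how Google interprets robots.txt (wildcards, for instance), so treat the result as a first check.

```python
from urllib.robotparser import RobotFileParser


def blocked_urls(robots_txt, urls, user_agent="Googlebot"):
    """Return the URLs that the given robots.txt rules disallow for user_agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


# Hypothetical rules that accidentally block the whole product catalog.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Disallow: /products/
"""

IMPORTANT_URLS = [
    "https://example.com/products/blue-widget",
    "https://example.com/blog/crawlability",
]

print(blocked_urls(ROBOTS_TXT, IMPORTANT_URLS))
```

Any URL in the output is a page you consider important but that bots are told not to crawl.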

Fix Broken Links

- Check for dead links regularly and repair or replace them.

- Set up the right redirects to guide users and bots to the correct page.
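When you add redirects for dead links, it helps to confirm that they do not form chains or loops. The sketch below assumes you have exported your redirect rules as a simple mapping from source path to target path; the mapping and the `trace_redirects` helper are hypothetical and only illustrate the idea.

```python
def trace_redirects(start, redirects):
    """Follow redirects from start and classify the result.

    Returns (path, verdict), where verdict is one of
    "no_redirect", "single", "chain", or "loop".
    """
    path = [start]
    seen = {start}
    current = start
    while current in redirects:
        current = redirects[current]
        path.append(current)
        if current in seen:
            # We have returned to a URL already visited: a redirect loop.
            return path, "loop"
        seen.add(current)
    hops = len(path) - 1
    if hops == 0:
        return path, "no_redirect"
    if hops == 1:
        return path, "single"
    return path, "chain"


# Hypothetical redirect rules, for illustration only.
REDIRECTS = {
    "/old": "/interim",
    "/interim": "/new",
    "/a": "/b",
    "/b": "/a",
}

for url in ("/old", "/a", "/new"):
    print(url, trace_redirects(url, REDIRECTS))
```

Chains can usually be fixed by pointing the first URL straight at the final destination; loops need one of the rules removed.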

Improve Website Speed

- Optimize code and compress images.

- Use caching and content delivery networks (CDNs).

Simplify URL Structure

- Use clean, descriptive URLs.

- Avoid unnecessary parameters and long strings.

Improve Internal Linking

- Link to all important pages from high-authority pages.

- Use clear anchor text to make navigation easier.
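To find pages that internal linking has missed, you can compare the pages you know exist against the pages that other pages link to. The sketch below assumes you already have a list of known pages (for example from your sitemap) and a map of internal links from a crawl; the data and the `find_orphans` helper are hypothetical.

```python
def find_orphans(all_pages, links, home="/"):
    """Return pages that no other page links to (the home page is exempt)."""
    linked_to = {
        target
        for source, targets in links.items()
        for target in targets
        if target != source  # a page linking to itself does not count
    }
    return sorted(set(all_pages) - linked_to - {home})


# Hypothetical site data, for illustration only.
ALL_PAGES = ["/", "/blog", "/blog/crawlability", "/pricing", "/landing/old-campaign"]
LINKS = {
    "/": ["/blog", "/pricing"],
    "/blog": ["/blog/crawlability", "/"],
    "/landing/old-campaign": ["/pricing"],
}

print(find_orphans(ALL_PAGES, LINKS))
```

Each page in the result needs at least one internal link from a crawlable page, or it should be retired.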

Use an XML Sitemap

- Submit your sitemap in Google Search Console.

- Keep it up to date as you add or change pages.
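If your platform does not generate a sitemap for you, a basic one is easy to produce. The sketch below builds a minimal sitemap with Python's standard library, following the sitemaps.org `urlset` / `url` / `loc` / `lastmod` structure; the URLs, dates, and the `build_sitemap` helper are placeholders for illustration.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(entries):
    """Build a sitemap XML string from (url, last_modified_date) pairs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in entries:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        ET.SubElement(url, "lastmod").text = lastmod  # W3C date, e.g. YYYY-MM-DD
    xml_bytes = ET.tostring(urlset, encoding="utf-8", xml_declaration=True)
    return xml_bytes.decode("utf-8")


# Placeholder pages, for illustration only.
PAGES = [
    ("https://example.com/", "2026-01-10"),
    ("https://example.com/blog/crawlability", "2026-01-08"),
]

print(build_sitemap(PAGES))
```

Regenerating the file whenever pages are added or changed keeps the `lastmod` values meaningful.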

Fix Server Problems

- Monitor uptime and server health.

- Upgrade your hosting as required.

Best Practices to Improve Crawlability

- Maintain a logical site structure.

- Ensure mobile responsiveness.

- Serve your site securely over HTTPS.

- Limit duplicate content.

- Regularly audit your website.

- Avoid unnecessary redirects.

- Keep essential pages within 3 clicks of the home page.
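The "3 clicks from the home page" guideline can be checked with a breadth-first search over your internal links. The sketch below assumes a link map taken from a crawl; the sample site and the `click_depths` and `too_deep` helpers are hypothetical.

```python
from collections import deque


def click_depths(links, home="/"):
    """Return the minimum number of clicks from home to each reachable page."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # first visit is the shortest route in BFS
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def too_deep(links, max_clicks=3, home="/"):
    """List reachable pages that need more than max_clicks clicks from home."""
    depths = click_depths(links, home)
    return sorted(page for page, depth in depths.items() if depth > max_clicks)


# Hypothetical link map, for illustration only.
SITE_LINKS = {
    "/": ["/blog"],
    "/blog": ["/blog/2026"],
    "/blog/2026": ["/blog/2026/january"],
    "/blog/2026/january": ["/blog/2026/january/crawlability"],
}

print(too_deep(SITE_LINKS))
```

Pages that never appear in the depth map at all are unreachable from the home page, which is a separate problem worth checking.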

Advanced Tips from SEO Experts

- Optimize crawl budget: Steer bots toward high-value pages and block low-value URLs.

- Use canonical tags: Consolidate duplicate-content signals under one preferred URL.

- Implement structured data: It helps search engines understand your content in more detail.

- Increase crawl speed: Improve server response times and minimize page load delays.

- Detect crawler activity: Monitor bot behavior using server logs or analytics tools.

Crawlability, AI & Modern SEO (2026 Insights)

Can AI crawl your site?

Yes. AI services, like search engines, use sophisticated crawlers to analyze content. Good crawlability helps both traditional and AI-driven indexing.

Is ChatGPT a web crawler?

No. ChatGPT is not a crawler; it relies on indexed data and integrations to retrieve information.

Can AI tools carry out SEO audits?

Yes. Current AI tools can assess crawlability problems, surface errors, and provide recommendations.

How to block AI crawlers

To keep AI bots away, you can use robots.txt rules and/or block specific user agents.
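As a sketch of user-agent blocking, the example below defines robots.txt rules that disallow one AI crawler while leaving other bots alone, then checks the rules with Python's standard `urllib.robotparser`. `GPTBot` (OpenAI's crawler) is used as the example user agent; the rules themselves and the `is_allowed` helper are illustrative. robots.txt is a request that well-behaved bots honor, not an enforcement mechanism.

```python
from urllib.robotparser import RobotFileParser

# Illustrative rules: block one AI crawler everywhere, keep /admin/ private for all bots.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /admin/
"""


def is_allowed(robots_txt, user_agent, url):
    """Check whether user_agent may fetch url under the given robots.txt rules."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


page = "https://example.com/blog/crawlability"
print("GPTBot allowed:", is_allowed(ROBOTS_TXT, "GPTBot", page))
print("Googlebot allowed:", is_allowed(ROBOTS_TXT, "Googlebot", page))
```

Bots that ignore robots.txt have to be blocked at the server or firewall level instead.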

Avoid crawler traps

Avoid cyclic URLs, redundant filters, and unnecessary dynamic parameters.

Detect crawler activity

Monitor your server logs to see which bots are accessing your site and how often.

Understanding SEO Structure and Concepts

Four pillars of SEO

- Technical SEO

- On-page SEO

- Off-page SEO

- Content SEO

Three core pillars

- Technical

- Content

- Authority

Two main techniques

- White-hat SEO

- Black-hat SEO

The four common mistakes in SEO

- Technical errors

- On-page issues

- Off-page problems

- User experience issues

Does Google crawl websites?

Yes. Google uses bots such as Googlebot to crawl and index billions of web pages per day.

Can you make Google crawl your site?

You can speed up the process by requesting indexing in Google Search Console and improving your site's crawlability.

Which tools are website crawlers?

Screaming Frog, Ahrefs, and SEMrush all run their own web crawlers, and Googlebot itself is one as well.

How to Improve Crawlability (Actionable Strategy)

- Use internal links to connect your pages.

- Make sure that your site loads quickly.

- Use your XML sitemap and robots.txt properly.

- Remove duplicate content.

- Fix all crawl errors.

- Use readable, SEO-friendly URLs.

- Improve server response time and hosting.

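How to Prevent Bots From Crawling Your Site

- Block particular pages with robots.txt.

- Add “noindex” meta tags (these keep pages out of the index, but bots must still be able to crawl the page to see the tag).

- Require authentication for private areas.

- Block specific user agents.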

Crawling a Website Manually

Crawling your site manually helps you identify crawlability issues quickly and gives you a feel for how search engines perceive your site.

Use a Tool Such as Screaming Frog

Simulate search engine bots with programs such as Screaming Frog or Ahrefs Site Audit.

- These automated tools scan your site and point out important SEO problems.

- They play a crucial role in diagnosing technical faults efficiently.

Enter Your Domain and Start the Crawl

- Add your website URL to the tool.

- Start the crawl and let it visit all available pages.

- Larger websites can take several minutes or more to finish.

Review the Results to Find Errors and Issues

- Check for broken links (404 errors) and redirects.

- Identify duplicate content and missing meta tags.

- Look for pages that are buried too deep or poorly linked.

Export the Data for Further SEO Analysis

- Export a CSV or Excel report.

- Sort through the important problems and prioritize fixes.

- Share the findings with your team or use them to plan improvements.

How to Avoid Web Crawler Traps

Web crawler traps are among the most damaging crawlability issues because they can use up your whole crawl budget and keep search engines away from your valuable pages. They usually arise when bots get caught in endless loops of URLs, filters, or dynamically generated pages.

Left unaddressed, crawler traps can slow down indexing, strain your server, and damage your search engine rankings. The best ways to prevent them are as follows:

Limit URL Parameters

URL parameters (?sort=, ?filter=, or ?session=) can generate many versions of one page, creating thousands of duplicate URLs.

- Search engine bots may crawl every variation, one at a time, consuming crawl budget.

- Heavy use of parameter-based URLs can leave search engines unsure which version to index.

- Manage these URLs with canonical tags or robots.txt rules (Google retired the Search Console URL Parameters tool in 2022).

- Use clean URLs and leave out unnecessary tracking or session parameters.

Limiting URL parameters reduces duplication and lets search engines crawl your site efficiently.
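To see how many parameter-based URLs collapse to the same page, you can normalize them by dropping parameters that do not change the content. The sketch below uses Python's standard `urllib.parse`; the `IGNORED_PARAMS` set and the `normalize_url` helper are assumptions for illustration, and which parameters are safe to drop depends on your own site.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumed list of parameters that do not change page content on this hypothetical site.
IGNORED_PARAMS = {"sort", "filter", "session", "utm_source", "utm_medium", "utm_campaign"}


def normalize_url(url):
    """Drop ignored query parameters and the fragment, keeping everything else."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key.lower() not in IGNORED_PARAMS
    ]
    return urlunsplit((parts.scheme, parts.netloc, parts.path, urlencode(kept), ""))


CRAWLED = [
    "https://example.com/shoes?sort=price",
    "https://example.com/shoes?session=abc123&sort=name",
    "https://example.com/shoes?page=2&sort=price",
]

# Three crawled URLs reduce to two distinct pages.
print(sorted({normalize_url(url) for url in CRAWLED}))
```

The normalized form is also a natural candidate for the canonical URL of each variant.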

Avoid Infinite Navigation Paths

Infinite navigation occurs when your site generates an endless supply of links through filters, calendars, pagination, or faceted navigation.

- Example: On eCommerce sites, stacking filters (size, color, price) can produce a practically unlimited number of URL combinations.

- Bots may keep crawling new variations instead of reaching your important pages.

- This creates a cycle that burns crawl budget and causes serious crawlability issues.

- Use proper pagination, limit filter combinations, and add nofollow to links that do not need to be crawled.

A controlled navigation structure ensures that bots reach only useful pages and do not get stuck.
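A quick calculation shows how fast filter combinations multiply. The sketch below counts the distinct filtered views of a single category page, assuming each filter is either unset or set to exactly one value; the facet sizes and the `count_filter_urls` helper are made up for illustration.

```python
from itertools import combinations
from math import prod


def count_filter_urls(facets, max_active=None):
    """Count category-page variants when at most max_active filters are applied.

    Each filter is either unset or set to exactly one of its values.
    The unfiltered page itself is included in the count.
    """
    sizes = [len(values) for values in facets.values()]
    limit = len(sizes) if max_active is None else min(max_active, len(sizes))
    total = 0
    for active in range(limit + 1):
        for chosen in combinations(sizes, active):
            total += prod(chosen)  # prod(()) is 1: the unfiltered page
    return total


# Made-up facet sizes: 8 sizes, 12 colors, 6 price bands.
FACETS = {
    "size": list(range(8)),
    "color": list(range(12)),
    "price": list(range(6)),
}

print(count_filter_urls(FACETS))                # every combination allowed
print(count_filter_urls(FACETS, max_active=1))  # only one filter at a time
```

With these numbers, a single category page expands to 819 crawlable variants, while allowing only one filter at a time keeps it to 27. Multi-select filters or varying parameter order would push the total higher still.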

Use Canonical Tags

Canonical tags help search engines recognize which URL is the primary (preferred) version among several variants of the same page.

- Avoid duplicate content caused by similar or parameter-based URLs.

- Consolidate ranking signals under a single URL rather than splitting them across duplicates.

- Direct crawlers to the right version of your content.

- Give every important page a self-referencing canonical tag.

A proper canonical implementation reduces duplicate crawling and improves overall crawl efficiency.
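To audit canonical tags, you can read the `<link rel="canonical">` element from a page's HTML and compare it with the page's own URL. The sketch below uses Python's standard `html.parser`; the sample markup, the `CanonicalFinder` class, and the `canonical_status` labels are hypothetical.

```python
from html.parser import HTMLParser


class CanonicalFinder(HTMLParser):
    """Collect the href of every <link rel="canonical"> tag in a document."""

    def __init__(self):
        super().__init__()
        self.canonicals = []

    def handle_starttag(self, tag, attrs):
        if tag != "link":
            return
        attributes = dict(attrs)
        rel_values = (attributes.get("rel") or "").lower().split()
        if "canonical" in rel_values and attributes.get("href"):
            self.canonicals.append(attributes["href"])


def canonical_status(html, page_url):
    """Classify a page as missing, conflicting, self, or points_elsewhere."""
    finder = CanonicalFinder()
    finder.feed(html)
    if not finder.canonicals:
        return "missing"
    if len(set(finder.canonicals)) > 1:
        return "conflicting"
    return "self" if finder.canonicals[0] == page_url else "points_elsewhere"


# Hypothetical page markup, for illustration only.
SAMPLE_HTML = """
<html><head>
  <title>Shoes</title>
  <link rel="canonical" href="https://example.com/shoes" />
</head><body></body></html>
"""

print(canonical_status(SAMPLE_HTML, "https://example.com/shoes"))
print(canonical_status(SAMPLE_HTML, "https://example.com/shoes?sort=price"))
```

A parameter-based variant reporting "points_elsewhere" is the expected result; an important page reporting "missing" or "conflicting" deserves attention.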

Block Unnecessary Dynamic URLs

Dynamic URLs created by scripts, filters, or search functions can produce unlimited combinations of pages.

- These URLs often have little or no SEO value, yet they still consume crawl budget.

- Block low-value dynamic pages, such as search results or filter combinations, with robots.txt.

- Apply “noindex” tags to keep unimportant pages out of the index.

- Keep internal search result pages hidden from external search engines.

Blocking irrelevant dynamic URLs keeps bots focused on quality content that deserves to be indexed.

Is SEO Dead or Evolving in 2026?

SEO is not dead; it has evolved rapidly. Today's search engines rely on AI, user intent, and experience-based ranking. Crawlability remains a core part of successful SEO, even in 2026.

Search rankings will continue to favor websites that are technically optimized, offer good content, and provide a good user experience.

Conclusion

Crawlability issues are among the most neglected yet most influential aspects of SEO. If your site cannot be crawled, your content will never rank to its full potential.

By understanding crawlability, recognizing the problems, and applying the right fixes, you can make your website fully search-engine friendly. Whether you are improving site speed, strengthening internal links, or eliminating errors, each small action reinforces your site and improves your search engine optimization.

In a competitive digital world, fixing crawlability issues is not optional; it is a necessity for long-term success.

Frequently Asked Questions

1. What are crawlability issues in SEO?

Crawlability issues are technical problems that prevent search engine bots from accessing and navigating your website. They can keep pages from being indexed, which directly affects your rankings and visibility in search results.

2. How can I improve the crawlability of my site?

To improve crawlability, optimize your site structure, repair broken links, improve page speed, use an XML sitemap, and configure your robots.txt file so that it does not block necessary pages. Regular SEO audits also help you identify and correct crawling issues quickly.

3. How do I check whether my site has crawlability issues?

You can detect crawlability issues with tools such as Google Search Console, Screaming Frog, or Ahrefs. These tools identify crawl errors such as 404 pages, server errors, redirect loops, and blocked URLs that affect your site's performance.

4. Can crawlability issues affect my rankings?

Yes, crawlability issues can significantly affect rankings. Pages that search engines cannot crawl or index will not appear in search results, no matter how well they are optimized.
